The human interface

If you are old enough, or unfortunate enough, to have used early versions of the Microsoft Office suite, you will probably remember the 'Mr Clippy' office assistant. This feature, first introduced in Office 97, popped up uninvited from the bottom right of your computer screen every time you typed the word 'Dear...' at the beginning of a document, with the prompt 'It looks like you are writing a letter. Would you like help with that?'

Mr Clippy, turned on by default in early versions of Office, was almost universally derided by users of the software and could go down in history as one of machine learning’s first big fails. The Smithsonian Magazine called Mr Clippy “one of the worst software design blunders in the annals of computing”.

So why was the cheery Mr Clippy so hated? Clearly the folks at Microsoft, at the forefront of consumer software development, were not stupid, and the idea that an automated assistant could help with day-to-day office tasks is not necessarily a bad one. Indeed, later incarnations of automated assistants, the best ones at least, operate seamlessly in the background and provide a demonstrable increase in work efficiency. Consider predictive text. There are many examples, some very funny, of predictive text going spectacularly wrong, but in the majority of cases, where it does not fail, it goes unnoticed. It just becomes part of our normal workflow.

At this point we need to distinguish between error and failure. Mr Clippy failed because it was obtrusive and poorly designed, not necessarily because it was in error; that is, it could make the right suggestion, but chances are you already knew that you were writing a letter. Predictive text has a high error rate, in that it often gets the prediction wrong, but it does not fail, largely because it is designed to fail unobtrusively.

The design of any system that has a 'tightly coupled human interface', to use systems engineering speak, is difficult. Human behaviour, like the natural world in general, is not something we can always predict. Expression recognition systems, natural language processing and gesture recognition technology, amongst other things, all open up new ways of human-machine interaction, and this has important applications for the machine learning specialist.

Whenever we are designing a system that requires human input, we need to anticipate the possible ways, not just the intended ways, a human will interact with the system. In essence, what we are trying to do with these systems is to instil in them some understanding of the broad panorama of human experience.

In the first years of the web, search engines used a simple system based on the number of times search terms appeared in articles. Web developers soon began gaming the system by increasing the number of key search terms on their pages. Clearly, this would lead to a keyword arms race and result in a very boring web. The PageRank system, which measures the number of quality inbound links, was designed to provide a more accurate search result. Now, of course, modern search engines use more sophisticated, and secret, algorithms.
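
To make the contrast concrete, here is a minimal sketch in Python. It is an illustration only: the page names, page text and link graph are invented, `keyword_score` and `link_scores` are hypothetical helpers, and the link-based scoring is a simplified PageRank-style iteration (with an assumed damping factor of 0.85), not the algorithm any real search engine uses.

```python
def keyword_score(page_text, query):
    """Count how many times the query terms appear in the page text."""
    words = page_text.lower().split()
    return sum(words.count(term) for term in query.lower().split())


def link_scores(links, damping=0.85, iterations=50):
    """Simplified PageRank-style scoring over a dict of page -> outbound links."""
    pages = list(links)
    n = len(pages)
    score = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page receives a small baseline share.
        new_score = {page: (1.0 - damping) / n for page in pages}
        for page, outbound in links.items():
            # A page with no outbound links spreads its score over all pages.
            targets = outbound if outbound else pages
            share = damping * score[page] / len(targets)
            for target in targets:
                new_score[target] += share
        score = new_score
    return score


# Invented example pages: one honest, one stuffed with keywords.
pages = {
    "honest": "a short guide to growing tomatoes in a small garden",
    "stuffed": "tomatoes tomatoes tomatoes buy tomatoes cheap tomatoes garden garden",
}
query = "tomatoes garden"
keyword_ranking = sorted(
    pages, key=lambda name: keyword_score(pages[name], query), reverse=True
)

# Invented link graph: other pages link to "honest"; nobody links to "stuffed".
links = {
    "honest": ["reference"],
    "stuffed": ["honest"],
    "reference": ["honest"],
    "blog": ["honest", "reference"],
}
link_rank = link_scores(links)

print(keyword_ranking)
print(link_rank["honest"] > link_rank["stuffed"])
```

Under keyword counting, the stuffed page ranks first simply because it repeats the query terms. Under the link-based score, it falls behind the honest page because no other page links to it.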

What is also important for ML designers is that the co-evolution of humans and algorithms has had an important by-product: a huge data resource that is, in many ways, beginning to distil some of the vastness of human experience. The power of algorithms to harvest this resource, to extract knowledge and insights that would not have been possible with smaller data sets, is massive. So many human interactions are now digitised, and we are only just beginning to understand and explore the many ways this data can be used.

As a curious example, consider the study 'The Expression of Emotions in 20th Century Books' (Acerbi et al., 2013). Though strictly more of a data analysis study than a machine learning one, it is illustrative for several reasons. Its purpose is to chart, over time, that most non-machine-like quality, emotion, extracted from books of the 20th century. With access to a large volume of digitised text through the Project Gutenberg digital library, WordNet (http://wordnet.princeton.edu/wordnet/) and Google's Ngram database (books.google.com/ngrams), the authors of this study were able to map cultural change over the 20th century as reflected in the literature of the time. They did this by mapping trends in the usage of 'mood' words.

For this study, the authors labelled each word (a 1-gram) and associated it with a mood score and the year it was published. Emotion words such as joy, sadness, fear and so forth can be scored according to the positive or negative mood they evoke. The mood score was obtained from WordNet (wordnet.princeton.edu), which assigns an affect score to each mood word. Finally, the authors simply counted the occurrences of each mood word.
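
Written out, the mood score for a given year takes roughly the following form. This is a reconstruction from the definitions given next, assuming the score is the mean, over the $n$ mood words, of each word's count divided by the count of 'the'. The symbols $M$, $\mu_M$ and $\sigma_M$ are labels assumed here for the raw score, its mean and its standard deviation across years:

$$
M = \frac{1}{n}\sum_{i=1}^{n}\frac{c_i}{C_{\text{the}}}, \qquad M_z = \frac{M - \mu_M}{\sigma_M}
$$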

Here c_i is the count of a particular mood word and n is the number of mood words (only words with a mood score, not all words). C_the is the count of the word 'the' in the text. Dividing by C_the normalises the sum to take into account that in some years more books were written (or digitised). Also, since many later books tend to contain more technical language, the count of the word 'the' was used to normalise rather than the total word count. This gives a more accurate representation of emotion over long time periods in prose text. Finally, the score is standardised to a z-score, M_z, by subtracting the mean and dividing by the standard deviation.
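
As a rough sketch of this calculation in Python, the following computes a raw score for each year and then standardises it. The counts are made-up numbers for illustration, not data from the study. The function names are hypothetical, and the use of the population standard deviation is an assumption, since the description does not say which form was used.

```python
from statistics import mean, pstdev


def mood_score(mood_counts, the_count):
    """Average the mood-word counts, each normalised by the count of 'the'."""
    if the_count <= 0:
        raise ValueError("the_count must be positive")
    n = len(mood_counts)
    return sum(count / the_count for count in mood_counts.values()) / n


def z_scores(scores_by_year):
    """Standardise yearly scores: subtract the mean, divide by the std deviation."""
    values = list(scores_by_year.values())
    mu = mean(values)
    sigma = pstdev(values)  # assumption: population standard deviation
    return {year: (score - mu) / sigma for year, score in scores_by_year.items()}


# Made-up counts per year: ({mood word: count}, count of the word 'the').
yearly_counts = {
    1938: ({"joy": 120, "happy": 80}, 10000),
    1943: ({"joy": 60, "happy": 40}, 10000),
    1950: ({"joy": 110, "happy": 90}, 10000),
}

raw = {
    year: mood_score(counts, the_count)
    for year, (counts, the_count) in yearly_counts.items()
}
standardised = z_scores(raw)

for year in sorted(standardised):
    print(year, round(standardised[year], 3))
```

With these invented numbers, the middle year has the lowest raw score and therefore a negative z-score, while the other two years sit above the mean.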

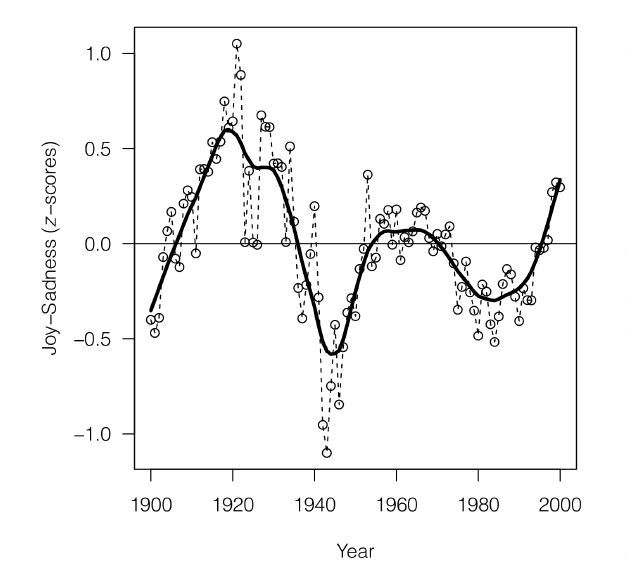

Figure: From 'The Expression of Emotions in 20th Century Books' (Alberto Acerbi, Vasileios Lampos, Philip Garnett, R. Alexander Bentley), PLOS ONE

Here we can see one of the graphs generated by this study. It shows the joy-sadness score for books written in this period and clearly shows a negative trend associated with the period of World War II.

This study is interesting for several reasons. Firstly, it is an example of data-driven science, where previously 'soft' sciences, such as sociology and anthropology, are given a solid empirical footing. Despite some pretty impressive results, this study was relatively easy to implement, mainly because most of the hard work had already been done by WordNet and Google. This highlights how, using data resources freely available on the internet and software tools such as Python's data and machine learning packages, anyone with the data skills and motivation is able to build on this work.